# The Pokemon Company apologizes for Van Gogh Museum collaboration mess

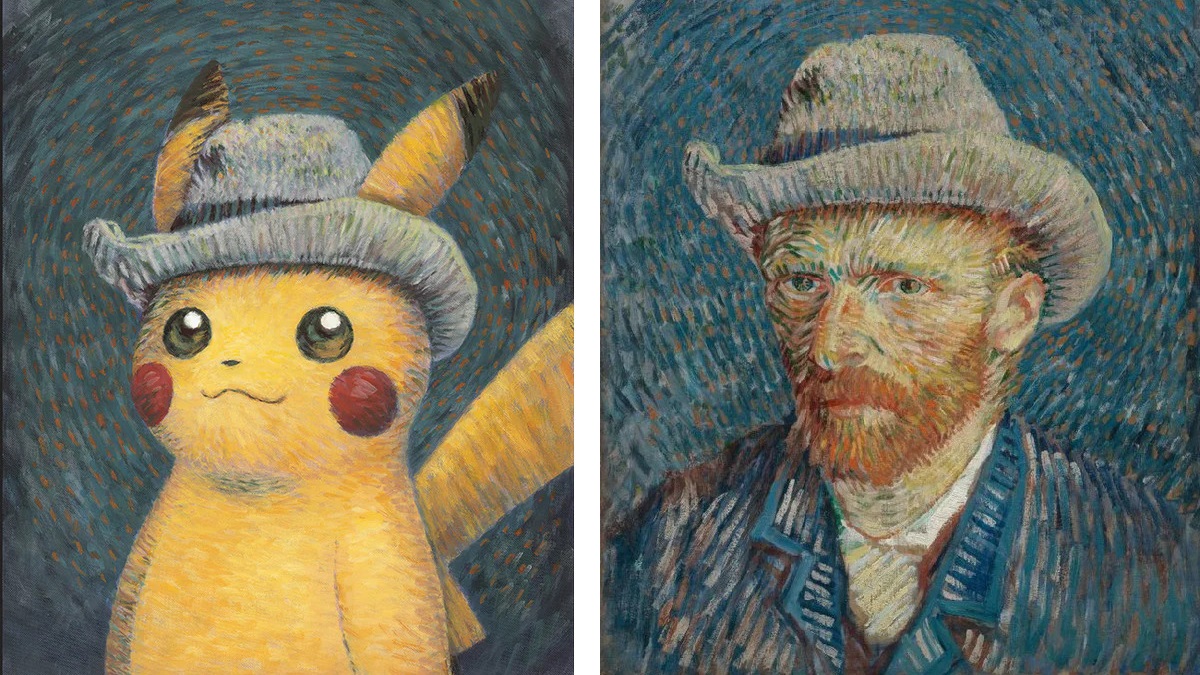

For those unaware, Pokemon and Amsterdam’s Van Gogh Museum are hosting an in-person collaboration until January 7, 2024. The event launched with Pokemon merchandise, and purchases included a limited edition promo card. The Pokemon TCG card features “Pikachu with Gray Felt Hat,” based on the famous “Self-Portrait with Gray Felt Hat” by Vincent van Gogh. I know, it’s cute!

However, due to the exclusivity of the event (it’s unlikely that every Pokemon fan can travel to Amsterdam, after all), the company offered fans elsewhere shopping alternatives. People in the United States, United Kingdom, and Canada could score their own promo card simply by purchasing an item from the Pokemon x Van Gogh Museum Collection on the Pokemon Center online store. That didn’t go as planned, leaving the company to apologize as the cards and limited goodies sold out.

“Due to overwhelming demand, all our products from this collection have sold out,” read @Pokemon’s tweet. “We understand this is disappointing to many who were looking to our official email and social media channels for guidance on how and when to purchase.”

Hmm. I wonder why this happened? It couldn’t be because Pokemon is, say it with me, massively popular, could it? As usual, giving the internet a means of obtaining exclusive items brings scalpers out of the woodwork to buy up the lot. On the bright side, the company said it’s exploring options to make this right for fans who missed out.

“We’re actively working on ways to provide more ‘Pikachu with Gray Felt Hat’ promo cards for fans purchasing at Pokémon Center in the future. Details will be released at a later date,” the post concluded.

It’s not the first time scalpers have quickly bought up goods meant for fans, and it certainly won’t be the last. We’ve seen everything from cheaper items like Metroid Prime physical copies to entire consoles marked up in the greedy rush. Anyway, here’s to hoping those of you who want a card can get one.